Data analysis doesn’t readily fall into the typical data center operator’s job description. That fact, and the traditional hands-on focus of those operators, isn’t likely to change soon.

But ignoring the flood of data now available to data centers through IoT technology, sensors and cloud-based analytics is no longer tenable. While the full impact of IoT data has yet to be realized, most data centers have already become too complex to manage manually.

What’s needed is an entirely new role, one with dotted-line, cross-functional responsibility to the operations, energy, sustainability and planning teams.

Consider this. The aircraft industry has historically been driven by design, mechanical and engineering teams. Yet General Electric aircraft engines, as an example, throw off terabytes of data on every single flight. These traditional teams don’t manage this massive quantity of data. Data analysts do: they continually monitor it to assess safety and performance, and they update the traditional teams, who can then take any necessary action.

Like aircraft, data centers are complex systems. Why aren’t they operated in the same data-driven way given that the data is available today?

Data center operators aren’t trained in data analysis, nor can they be expected to take it on. The new data analyst role requires mastery of an entirely different set of tools. It also requires domain-specific knowledge, so that incoming information can be intelligently monitored and triaged to separate a red-flag event from something that can be addressed during normal work hours to improve reliability or reduce energy costs.
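To make the triage idea concrete, here is a minimal sketch in Python of how an incoming sensor reading might be sorted into a red-flag event or a work-hours item. Everything in it is an assumption made for illustration: the `Reading` and `Priority` types, the metric names and the threshold values are hypothetical and do not come from any standard or product. A real analyst would set them from domain knowledge of the specific facility.

```python
from dataclasses import dataclass
from enum import Enum


class Priority(Enum):
    RED_FLAG = "red_flag"      # needs immediate attention
    WORK_HOURS = "work_hours"  # can wait for normal working hours
    NORMAL = "normal"          # no action needed


@dataclass
class Reading:
    sensor_id: str
    metric: str
    value: float


# Hypothetical (advisory, critical) limits per metric, for illustration only.
THRESHOLDS = {
    "inlet_temp_c": (27.0, 35.0),
    "humidity_pct": (60.0, 80.0),
}


def triage(reading: Reading) -> Priority:
    """Classify one reading against the illustrative limits above."""
    limits = THRESHOLDS.get(reading.metric)
    if limits is None:
        # Unknown metrics are treated as normal in this sketch.
        return Priority.NORMAL
    advisory, critical = limits
    if reading.value >= critical:
        return Priority.RED_FLAG
    if reading.value >= advisory:
        return Priority.WORK_HOURS
    return Priority.NORMAL
```

Fixed thresholds are the simplest possible rule. In practice the judgment described above would likely also weigh trends, correlations across sensors and the cost of a false alarm, which is exactly why the role calls for domain knowledge.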

It’s increasingly clear that managing solely through experience and physical oversight is no longer best practice and will no longer keep pace with the increasing complexity of modern data centers. Planning or modeling based only on current conditions – or a moment in time – is also not sufficient. The rate of change, both planned and unplanned, is too great. Data, like data centers, is fluid and multidimensional.

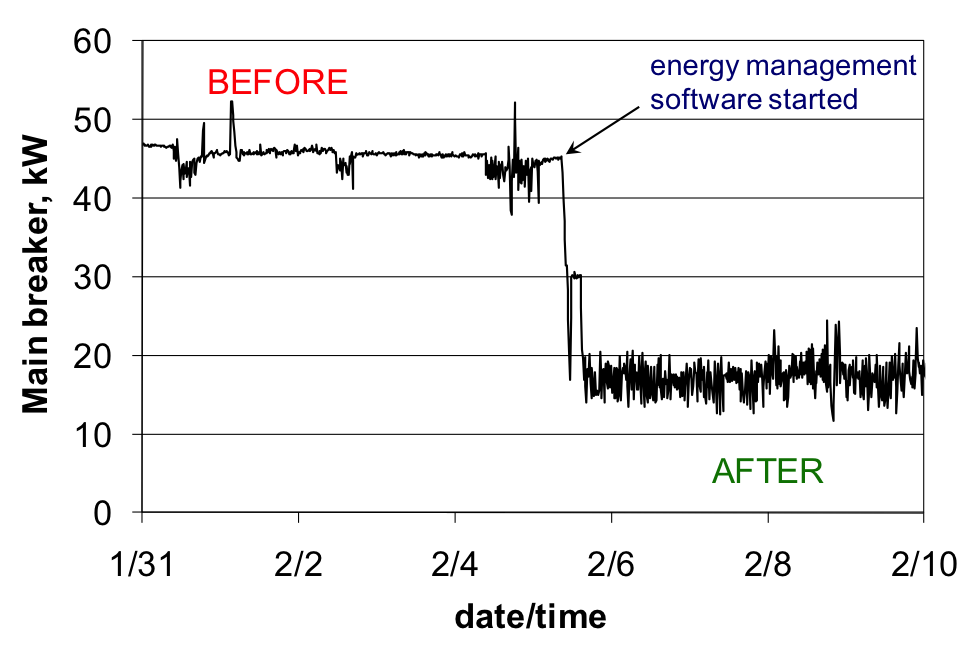

Beyond its undeniable necessity for managing day-to-day operational complexity, data analysis adds significant value by revealing cost-saving and revenue-generating opportunities in energy use, capacity and risk avoidance. It’s time to build this competency into data center operations.