Why Machine Learning-based DCIM Systems Are Becoming Best Practice

Here’s a conundrum. While data center IT equipment has a lifespan of about three years, data center cooling equipment lasts about 15 years. In other words, your data center will likely undergo at least five complete IT refreshes within the lifetime of your cooling equipment. In reality, changes happen much more frequently. Racks and servers come and go, floor tiles are moved, maintenance is performed, density is changed based on containment operations – any one of which will affect the ability of the cooling system to work efficiently and effectively.

If nothing is done to reconfigure cooling operations as IT changes are made, which is typically the case, the data center develops hot and cold spots, stranded cooling capacity, and wasted energy. There is also risk with every equipment refresh – particularly if the work is done manually.

There’s a better way. The widespread availability of low-cost sensors, in tandem with the emerging availability of machine learning technology, is leading to the development of new best practices for data center cooling management. Sensor-driven machine learning software enables the impact of IT changes on cooling performance to be anticipated and more safely managed.

Data centers instrumented with sensors gather real-time data that can inform software of minute-by-minute changes in cooling capacity. Machine learning software uses this information to understand the influence of each cooling unit on each rack, in real time, as IT loads change. When loads or IT infrastructure change, the software re-learns and updates itself accordingly, so that its influence predictions remain current and accurate. This ability to understand cooling influence at a granular level also enables the software to learn which cooling units are working effectively – and at expected performance levels – and which aren’t.
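To make the idea of learned "influence" concrete, here is a minimal sketch in Python. It is not any vendor's actual algorithm. It assumes, purely for illustration, that a change in rack inlet temperature can be approximated as a weighted sum of changes in cooling unit output, and it re-estimates those weights with a normalized least-mean-squares update each time new sensor readings arrive. The class name `InfluenceModel`, its parameters, and the linear model itself are all hypothetical.

```python
from typing import List


class InfluenceModel:
    """Hypothetical online estimate of each cooling unit's effect on each rack.

    weights[r][u] approximates the change in rack r's inlet temperature
    per unit change in cooling unit u's output (assumed linear model).
    A negative weight means more cooling output lowers the rack temperature.
    """

    def __init__(self, n_racks: int, n_units: int, learning_rate: float = 0.5):
        self.weights: List[List[float]] = [[0.0] * n_units for _ in range(n_racks)]
        self.learning_rate = learning_rate

    def predict(self, unit_deltas: List[float]) -> List[float]:
        """Predict the temperature change at every rack for given output changes."""
        return [sum(w * d for w, d in zip(row, unit_deltas)) for row in self.weights]

    def update(self, unit_deltas: List[float], rack_temp_deltas: List[float]) -> None:
        """Nudge the weights toward the latest observation (normalized LMS step)."""
        norm = sum(d * d for d in unit_deltas)
        if norm == 0.0:
            return  # no cooling change was observed, so there is nothing to learn
        predicted = self.predict(unit_deltas)
        for r, row in enumerate(self.weights):
            error = rack_temp_deltas[r] - predicted[r]
            step = self.learning_rate * error / norm
            for u in range(len(row)):
                row[u] += step * unit_deltas[u]
```

Because the weights are updated with every new observation, a model like this keeps adapting after racks are moved or loads shift, which is the behavior described above. A production system would also need to handle sensor noise, time lags, and non-linear effects that this sketch ignores.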

This understanding also illuminates, in a data-supported way, the need for targeted corrective maintenance. With a clearer understanding and visualization of cooling unit health, operators can justify the right budget to maintain equipment effectively, thereby improving overall health and reducing risk in the data center.
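As a rough illustration of how sensor data can turn cooling unit health into a quantifiable finding, the Python sketch below flags units that draw power without producing a meaningful temperature drop between return and supply air. The `UnitReading` fields, the function name, and both thresholds are assumptions chosen for the example, not values from any particular product or site.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class UnitReading:
    """One snapshot of a cooling unit's sensors (hypothetical fields)."""
    unit_id: str
    power_kw: float        # electrical power the unit is drawing
    return_temp_c: float   # air temperature entering the unit
    supply_temp_c: float   # air temperature leaving the unit


def find_ineffective_units(
    readings: List[UnitReading],
    min_power_kw: float = 1.0,    # assumed threshold for "consuming power"
    min_delta_t_c: float = 2.0,   # assumed minimum useful temperature drop
) -> List[str]:
    """Return IDs of units that consume power but barely cool the air."""
    flagged = []
    for reading in readings:
        delta_t = reading.return_temp_c - reading.supply_temp_c
        if reading.power_kw >= min_power_kw and delta_t < min_delta_t_c:
            flagged.append(reading.unit_id)
    return flagged
```

A list like the one this function returns, paired with the power each flagged unit draws, is the kind of evidence that can support a maintenance budget request.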

In one recent experience at a large US data center, machine learning software revealed that 40% of the cooling units were consuming power but not cooling. The data center operator was aware of the problem, but couldn’t convince senior management to allocate budget because he could neither quantify the problem nor prove the need for a specific expenditure to resolve it. With new and clear data in hand, the operator was able to identify the failed CRACs and present the budget required to fix or replace them.

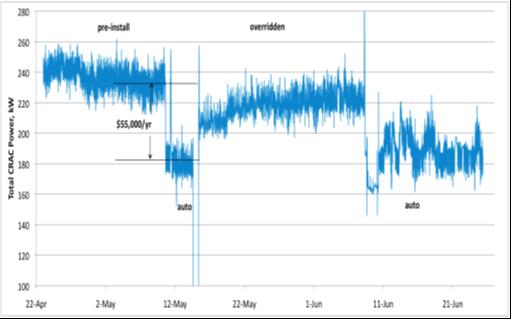

This ability to see the impact of IT changes on cooling equipment more clearly enables personnel to keep up with cooling capacity adjustments and, in most cases, eliminates the need for manual control. A reduction in the corresponding “on-the-fly, floor time corrections” also frees operators to focus on problems that require more creativity and to manage physical changes, such as floor tile adjustments, more effectively.

There’s no replacement for experience-based human expertise. However, why not leverage your staff to do what they do best, and hand off those tasks that are better served by software control? Data centers using machine learning software are undeniably more efficient and more robust. Operators can more confidently future-proof themselves against inefficiency or adverse capacity impact as conditions change. For these reasons alone, use of machine learning-based software should be considered an emerging best practice.