The Internet of Things offers an unprecedented opportunity to ease the long-standing conflict among facilities, IT, and sustainability managers. Traditionally, these three groups have operated in separate silos, each with its own priorities.

Data generated by more granular sensing in data centers reveals information that has traditionally been difficult to access and hard to share between groups. This data can provide both an incentive and a means to work together by establishing a common source for business discussions. This concept is becoming increasingly important. As Bill Kleyman wrote in a Data Center Knowledge article projecting data center and cloud considerations for 2016: “The days of resources locked in silos are quickly coming to an end.” We agree. Although Kleyman was referring to architectural convergence, we believe his forecast applies just as strongly to data. Giving multiple groups access to more comprehensive data has collaborative power, and IoT contributes both to generating such data and to acting on it in real time.

Consider the following examples of how IoT-driven operations can accelerate decision-making and collaboration between IT and Facilities.

IT Expansion Deployments

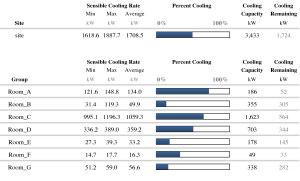

As services shift to the network edge, or as a particular geographic region needs to carry more traffic, IT is usually tasked with identifying the most suitable sites for these expansions. In larger companies, there can be 50 or more candidate sites, and IT and Facilities need to narrow them to a short list quickly.

A highly granular view of the actual (as opposed to designed) operating cooling capacity available at each candidate site would greatly speed up and simplify this selection. With that information in hand, Facilities can readily make the case for the sites that are most attractive in terms of cost and time, and/or build a business case for the upgrades needed to support IT’s expansion deployments.
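To make the shortlisting step concrete, here is a minimal sketch, assuming each site has already been summarized from sensor data into two numbers: its measured operating cooling capacity and the IT heat load it is currently removing, both in kW. The `Site` class, the `shortlist` function, and the site figures are hypothetical illustrations, not part of any specific product.

```python
from dataclasses import dataclass


@dataclass
class Site:
    """Hypothetical per-site summary built from IoT sensor readings."""
    name: str
    operating_cooling_kw: float  # measured (actual, not designed) cooling capacity
    it_load_kw: float            # measured heat load the site is already removing

    @property
    def headroom_kw(self) -> float:
        # Cooling that could absorb new IT load without an upgrade.
        return self.operating_cooling_kw - self.it_load_kw


def shortlist(sites: list[Site], required_kw: float, limit: int = 5) -> list[Site]:
    """Return up to `limit` sites whose headroom covers `required_kw`,
    ordered from largest to smallest headroom."""
    candidates = [s for s in sites if s.headroom_kw >= required_kw]
    candidates.sort(key=lambda s: s.headroom_kw, reverse=True)
    return candidates[:limit]


# Hypothetical example data, not measurements from real sites.
sites = [
    Site("site-a", operating_cooling_kw=400.0, it_load_kw=310.0),
    Site("site-b", operating_cooling_kw=250.0, it_load_kw=120.0),
    Site("site-c", operating_cooling_kw=600.0, it_load_kw=580.0),
]

for s in shortlist(sites, required_kw=50.0):
    print(s.name, s.headroom_kw)  # site-b 130.0, then site-a 90.0
```

Note that the largest site by designed capacity (`site-c`) drops off the list because its measured headroom is small; sites that fall short are where an upgrade business case would start.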

Data can expose previously hidden or unknowable information. Capacity planners get the information they need to deploy assets in the right places, faster and at lower cost. Everyone gets what they want.

Repurposing Capital Assets

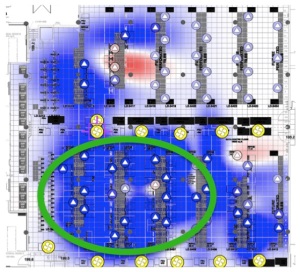

After airflow is balanced, and redundant or unnecessary cooling is put into standby through automated control, IT and Facilities can view the amount of cooling actually available in a particular area in real time. It then becomes easy to identify rooms that have far more cooling than they need. The surplus cooling units can be moved to a different part of the facility, or to a different site, as needed.
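As a rough illustration of how surplus units might be identified, the sketch below assumes each room has identical cooling units and that the required unit count is simply the measured heat load divided by per-unit capacity, rounded up, plus a redundancy margin (N+1 by default). That is a deliberate simplification: it ignores how airflow is actually distributed, which is why balancing airflow comes first in practice. The `Room` class, `unit_balance` function, and figures are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Room:
    """Hypothetical room summary; assumes all cooling units are identical."""
    name: str
    heat_load_kw: float      # measured IT heat load in the room
    unit_capacity_kw: float  # cooling capacity of a single unit
    installed_units: int


def unit_balance(room: Room, redundant_units: int = 1) -> int:
    """Installed units minus units needed for the measured load plus an
    N+redundant_units margin. Positive means surplus; negative means shortfall."""
    needed = math.ceil(room.heat_load_kw / room.unit_capacity_kw) + redundant_units
    return room.installed_units - needed


# Hypothetical example data, not measurements from a real facility.
rooms = [
    Room("room-1", heat_load_kw=180.0, unit_capacity_kw=100.0, installed_units=6),
    Room("room-2", heat_load_kw=420.0, unit_capacity_kw=100.0, installed_units=5),
]

for r in rooms:
    print(r.name, unit_balance(r))  # room-1 3, then room-2 -1
```

Here `room-1` needs three units (two for load, one for redundancy) but has six, so three are candidates to move; `room-2` is one unit short and could receive one of them instead of a new purchase.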

IoT powered by smart software can thus expose inefficient capital asset allocation. Rather than spending money on new capital assets, an organization can move existing ones from one place to another. This has large and nearly immediate financial benefits. It also establishes a way for the facilities team, which maintains the cooling system, to cooperate with the IT team, which needs to deploy additional IT assets and is tasked with paying for additional cooling.

In both situations, data produced by IoT becomes the arbiter and the common language around which the business cases are built.

Data essentially becomes the “neutral party.”

All stakeholders can benefit from IoT-produced data to make rational and mutually understood decisions. As more IoT-based data becomes available, stakeholders who use it to augment their intuition will find that data’s collaborative power is profitable as well as insightful.